Distributed Systems

Section III: Distributed Systems Fundamentals

April 9, 2026

What Is a Distributed System?

Working definition: Multiple nodes that communicate over a network to appear as one system to users.

- Examples: web apps with external auth, multiplayer games, file sync, P2P sharing.

- Key properties: exposed to independent failures and unreliable networks; shared ownership.

- Design goal: deliver correct, available service despite those limits.

Client–Server: Centralized Index and Control

Definition: Clients request data or service from a centralized server (or cluster) that holds authority.

- Strengths:

- Simple mental model

- Easier to govern and enforce policy

- Fast, centralized search and indexing

- Weaknesses:

- Control and failure are concentrated (single point of failure)

- Scaling hotspots (the server can become a bottleneck)

- Real-world pattern: Centralized discovery combined with direct data transfer (e.g., DNS, traditional web apps).

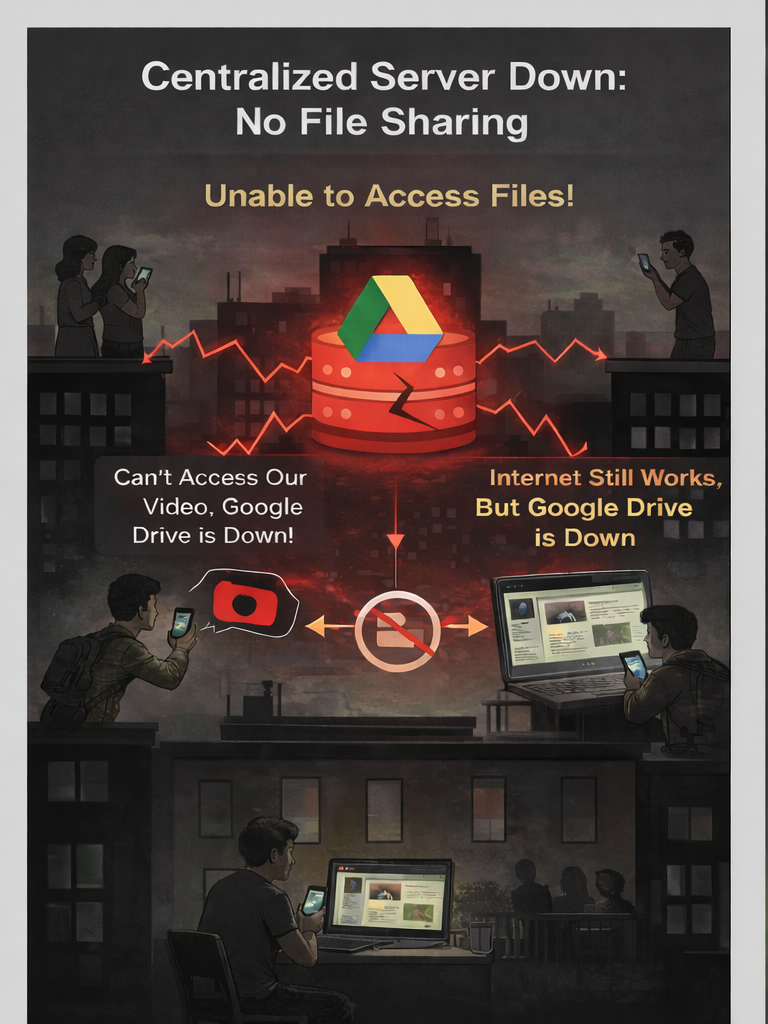

Blackout at 8 PM: Can We Still Share?

- Imagine an outage of major internet services (AWS, Google, Cloudflare, etc.).

- Your phone has data; your home internet works.

- You can’t reach Google, but you could share a file directly with your neighbor. But how?

- Can we still share without a central server?

- What if internet and cell service are both completely down?

Centralized vs. P2P

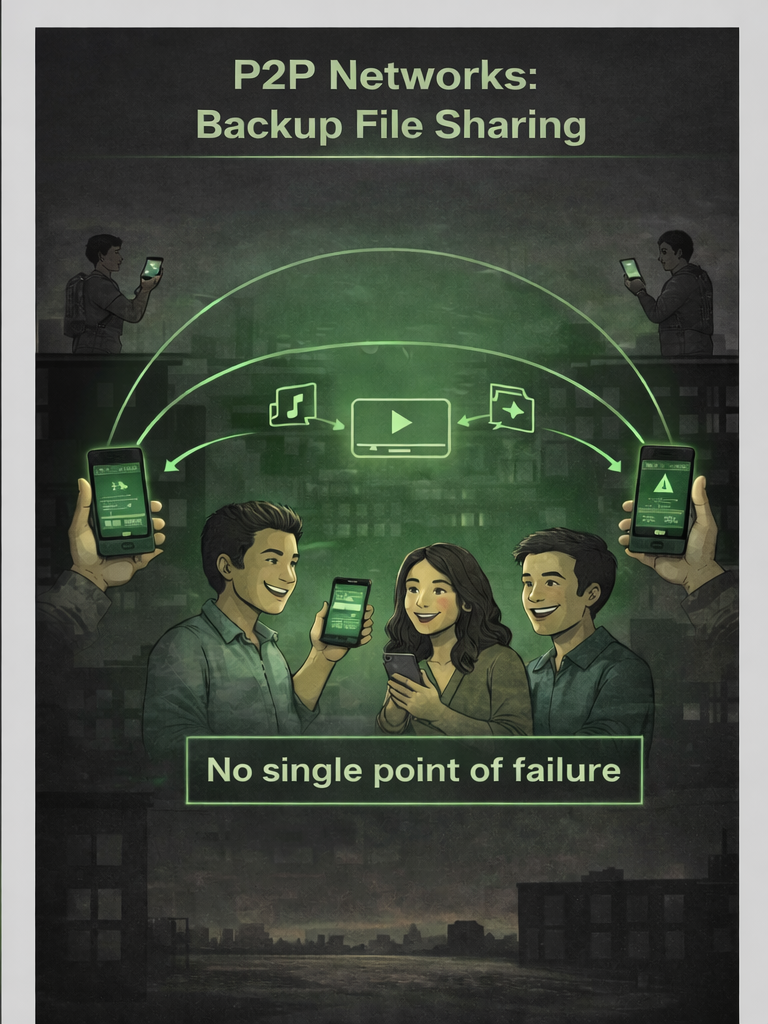

This is the core idea of a Peer-to-Peer (P2P) network.

- P2P networks have been around for decades and were central to early file-sharing systems.

- ARPANET (project begun 1966, first links 1969) \(\rightarrow\) early packet-switched network that helped shape the Internet

- Usenet (1979) \(\rightarrow\) early distributed discussion system widely associated with the early Internet era

- DNS (1983) \(\rightarrow\) naming infrastructure essential to the Internet and the World Wide Web

- They do not rely on a central server to work!

Centralized vs. P2P

Takeaway

P2P overlays are often less efficient than a centralized hub because messages may travel through more hops and coordination is harder. That extra complexity buys robustness: no single chokepoint can cut off availability, impose censorship, or bring down the whole system.

Centralized vs. Decentralized: Trade‑offs

Centralized

- Clear control and governance

- Strong consistency and observability

- Single point of failure and policy risk

Decentralized (P2P)

- No central chokepoint; resilient to takedown

- Autonomy for participants; local control

- Higher coordination cost; variable consistency

Why Distribute? Motivations and Risks

Motivations

- Scale out capacity and storage

- Place compute near users to reduce perceived delay

- Fault isolation and graceful degradation

- Autonomy between teams or organizations

Risks

- Coordination complexity and new failure modes

- Latency and bandwidth limits

- Security, trust, and heterogeneous administration

Distributed Failures

- Node-level, service-level, and network-level failures are different in scope and impact.

- Node-level failures affect one machine or process.

- Service-level failures break a shared application or dependency used by many nodes.

- Network-level failures partition links, racks, zones, or regions and can disrupt multiple services at once.

- Hidden external dependencies can turn a small fault into a major outage.

- Failures may be contained with isolation, timeouts, and graceful degradation.

Distributed Computing and Blockchain

- Blockchains are inherently distributed systems.

- A local-only blockchain would be little more than a personal log; the value comes from shared state across many machines.

- Bitcoin combined distributed networking, cryptography, and incentives into a system with no central operator.

- Understanding failure, propagation, and coordination helps us understand blockchain design constraints.

Availability, MTTF, and MTTR

- Availability ≈ (time the service is operational) ÷ (total time users need it).

- MTTF (Mean Time To Failure): average time a system runs before failing.

- MTTR (Mean Time To Repair): average time to recover and restore service after failure.

- To improve availability: increase MTTF (fail less often) and reduce MTTR (recover faster).

- Core design levers: retry failed operations and replicate critical components.
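The relationship between these quantities can be made concrete with a little arithmetic. The sketch below assumes the common steady-state formula availability = MTTF ÷ (MTTF + MTTR), and it assumes replicas fail independently, which real systems often violate. The function names and the numbers are illustrative only.

```python
def availability(mttf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability: the fraction of time the service is up."""
    return mttf_hours / (mttf_hours + mttr_hours)


def replicated_availability(single: float, replicas: int) -> float:
    """Availability when any one of `replicas` copies is enough.

    Assumes the copies fail independently (an idealization).
    """
    return 1.0 - (1.0 - single) ** replicas


# Illustrative numbers, not measurements.
base = availability(mttf_hours=999.0, mttr_hours=1.0)
faster_repair = availability(mttf_hours=999.0, mttr_hours=0.5)
two_copies = replicated_availability(base, replicas=2)

print(f"base={base:.6f} faster_repair={faster_repair:.6f} two_copies={two_copies:.6f}")
```

Halving MTTR and adding a replica both raise availability. Under the independence assumption, replication has the larger effect here.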

The Eight Fallacies

- What they are: A checklist of common bad assumptions engineers make about networks in distributed systems.

- Why they matter: These assumptions cause fragile designs, outages, security gaps, and poor user experience.

- Blockchain relevance: Blockchains are distributed systems on hostile, unreliable networks, so each fallacy shows up in practice.

- Historical origin: The fallacies were developed over time at Sun Microsystems in the 1990s, largely from internal engineering experience.

- Attribution note: The first seven are often credited to L. Peter Deutsch; James Gosling is commonly credited with adding the eighth.

Think of these as a pre-flight checklist: before trusting a design, ask which fallacies it accidentally assumes.

Fallacy #1: The Network Is Reliable

- Fallacy: Messages will get through if we send them.

- Reality: Networks drop, duplicate, reorder, and delay packets; partial failure is normal.

- Failure mode: A payment API call times out after server-side success, and the client assumes failure.

- Design response: Use timeouts, retries with backoff, and idempotent operations with request IDs.

- Blockchain lens: Transaction and block propagation is best-effort, not guaranteed.

Where would we see this in Bitcoin or Ethereum propagation behavior?
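The payment failure mode and its design response can be sketched in a few lines. `PaymentServer`, `call_with_retries`, and the simulated lost replies are hypothetical names invented for this illustration. A production client would also add jitter and pass a real sleep function.

```python
import uuid


class PaymentServer:
    """Hypothetical server that remembers request IDs it has already applied."""

    def __init__(self):
        self.processed = {}   # request_id -> stored result
        self.charges = 0      # how many times money actually moved

    def charge(self, request_id: str, amount: int) -> str:
        if request_id in self.processed:       # duplicate: replay the stored result
            return self.processed[request_id]
        self.charges += 1
        result = f"charged {amount}"
        self.processed[request_id] = result
        return result


def call_with_retries(send, max_attempts=4, base_delay=0.1, sleep=lambda s: None):
    """Retry `send` on TimeoutError, doubling the delay after each failure.

    `sleep` defaults to a no-op so the sketch runs instantly; pass time.sleep
    in real use.
    """
    delay = base_delay
    for attempt in range(1, max_attempts + 1):
        try:
            return send()
        except TimeoutError:
            if attempt == max_attempts:
                raise
            sleep(delay)
            delay *= 2


server = PaymentServer()
request_id = str(uuid.uuid4())        # one ID reused across every retry
lost_replies = [True, True, False]    # the first two replies are lost in transit


def send():
    result = server.charge(request_id, 25)   # server-side success happens first
    if lost_replies.pop(0):
        raise TimeoutError("reply lost")
    return result


outcome = call_with_retries(send)
print(outcome, "| charges applied:", server.charges)
```

The client sees two timeouts, yet the server applies the charge only once because every retry carries the same request ID.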

Fallacy #2: Latency Is Zero

- Fallacy: Distance and round trips are negligible.

- Reality: Latency is unavoidable and accumulates across protocol steps.

- Failure mode: A chatty protocol with multiple back-and-forth exchanges causes visible user lag.

- Design response: Reduce round trips, batch requests, cache reads, and colocate tightly coupled services.

- Blockchain lens: Propagation delay affects mempool view, confirmation speed, and fork creation.

What user-facing blockchain behaviors are really latency artifacts?
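A rough back-of-the-envelope model shows why chatty protocols hurt. The round-trip time and per-item server cost below are assumed values chosen for illustration, and the model ignores bandwidth and queuing.

```python
def total_latency_ms(round_trips: int, rtt_ms: float,
                     server_ms_per_item: float, items: int) -> float:
    """Network wait plus server work for fetching `items` records."""
    return round_trips * rtt_ms + items * server_ms_per_item


ITEMS = 20
RTT_MS = 80.0      # assumed long-distance round trip
SERVER_MS = 1.0    # assumed per-item processing time

# One request per item versus one batched request for all items.
chatty = total_latency_ms(round_trips=ITEMS, rtt_ms=RTT_MS,
                          server_ms_per_item=SERVER_MS, items=ITEMS)
batched = total_latency_ms(round_trips=1, rtt_ms=RTT_MS,
                           server_ms_per_item=SERVER_MS, items=ITEMS)

print(f"chatty={chatty} ms, batched={batched} ms")
```

The server does the same work in both cases. The difference comes entirely from the number of round trips.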

Fallacy #3: Bandwidth Is Infinite

- Fallacy: We can broadcast freely without cost or congestion.

- Reality: Throughput is finite, shared, and uneven across participants.

- Failure mode: Flooding queries or large payload gossip saturates links and starves useful traffic.

- Design response: Compress data, cap message size, deduplicate inventory, and avoid blind broadcast.

- Blockchain lens: Bigger or more frequent messages disadvantage low-bandwidth nodes and strain decentralization, so many blockchain designs work to minimize the amount of data transmitted.

How can bandwidth pressure quietly centralize a network?
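One way to avoid blind broadcast is to announce a short hash first and send the full payload only to peers that lack it. The sketch below is a simplified, hypothetical version of that idea, similar in spirit to the inventory announcements used in Bitcoin's P2P protocol. `Node` and `offer` are names invented here.

```python
import hashlib


class Node:
    """Hypothetical node that tracks which payloads it already holds."""

    def __init__(self, name: str):
        self.name = name
        self.store = {}           # item hash -> payload
        self.bytes_received = 0

    def wants(self, item_hash: str) -> bool:
        return item_hash not in self.store

    def receive(self, payload: bytes) -> None:
        self.bytes_received += len(payload)
        self.store[hashlib.sha256(payload).hexdigest()] = payload


def offer(receiver: Node, payload: bytes) -> bool:
    """Announce the hash; transfer the payload only if the receiver lacks it."""
    item_hash = hashlib.sha256(payload).hexdigest()
    if not receiver.wants(item_hash):
        return False              # only the short hash crossed the wire
    receiver.receive(payload)
    return True


block = b"x" * 1_000_000          # stand-in for a large block
peer = Node("c")
first = offer(peer, block)        # first neighbor offers: peer has not seen it
second = offer(peer, block)       # second neighbor offers: already stored

print(first, second, peer.bytes_received)
```

The second offer costs one hash comparison instead of another megabyte of transfer.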

Fallacy #4: The Network Is Secure

- Fallacy: Peers and links are trustworthy by default.

- Reality: Networks are adversarial; spoofing, eclipse attempts, and malicious peers exist.

- Failure mode: A node accepts biased peer data and gets a distorted view of the network.

- Design response: Authenticate data, diversify peers, validate independently, and enforce rate limits.

- Blockchain lens: Signatures protect authenticity, but not peer honesty or availability; public blockchains must handle malicious nodes and network connections.

What attacks remain possible even with strong cryptographic signatures?

Fallacy #5: Topology Doesn’t Change

- Fallacy: Node membership and paths stay stable.

- Reality: Churn is constant; nodes join, leave, reboot, and move.

- Failure mode: Static peer assumptions cause partitions or stalled gossip during churn events.

- Design response: Continuous peer discovery, health-based eviction, and resilient rebroadcast policies.

- Blockchain lens: Gossip and peer discovery have to tolerate constant churn, because nodes are always joining or leaving the network.

What breaks if your design assumes a stable peer list?
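Health-based eviction can be sketched as a peer table that records when each peer was last heard from. `PeerTable`, its timeout, and the peer names are hypothetical. Timestamps are passed in explicitly so the example is deterministic.

```python
class PeerTable:
    """Hypothetical peer list that evicts peers not heard from recently."""

    def __init__(self, timeout_s: float):
        self.timeout_s = timeout_s
        self.last_seen = {}       # peer address -> time of last message

    def heard_from(self, peer: str, now: float) -> None:
        self.last_seen[peer] = now

    def evict_stale(self, now: float) -> list:
        """Remove and return peers that have been silent longer than the timeout."""
        stale = [p for p, t in self.last_seen.items() if now - t > self.timeout_s]
        for p in stale:
            del self.last_seen[p]
        return stale

    def needs_discovery(self, min_peers: int) -> bool:
        """True when the table has shrunk below the desired peer count."""
        return len(self.last_seen) < min_peers


table = PeerTable(timeout_s=30.0)
table.heard_from("peer-a", now=0.0)
table.heard_from("peer-b", now=0.0)
table.heard_from("peer-a", now=25.0)      # peer-a is still active
evicted = table.evict_stale(now=40.0)     # peer-b has been silent for 40 s

print(evicted, table.needs_discovery(min_peers=2))
```

When `needs_discovery` returns true, a real node would run peer discovery again instead of waiting on a static list.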

Fallacy #6: There Is One Administrator

- Fallacy: One authority controls policy, upgrades, and operations.

- Reality: Real systems span teams and organizations with different incentives, timelines, and governance.

- Failure mode: Incompatible upgrades or policy divergence split behavior across clients or operators.

- Design response: Version negotiation, staged rollouts, explicit governance, and backward compatibility windows.

- Blockchain lens: Permissionless/public networks coordinate upgrades without a single administrator.

Who gets to decide protocol changes in a decentralized network?
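Version negotiation, one of the design responses above, can be as simple as picking the highest protocol version both sides support. This is a minimal sketch with made-up version numbers. Real protocols layer feature flags and activation rules on top of this.

```python
def negotiate_version(ours: set, theirs: set):
    """Return the highest protocol version both sides support, or None."""
    common = ours & theirs
    return max(common) if common else None


upgraded_node = {1, 2, 3}
lagging_node = {1, 2}          # has not deployed version 3 yet
incompatible_node = {4}        # shares no version with the others

agreed = negotiate_version(upgraded_node, lagging_node)
no_overlap = negotiate_version(upgraded_node, incompatible_node)

print(agreed, no_overlap)
```

Keeping older versions in the supported set is what creates a backward compatibility window. Dropping them too early produces the `None` case.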

Fallacy #7: Transport Cost Is Zero

- Fallacy: Moving data has negligible monetary and resource cost.

- Reality: Egress, relay, storage, and compute costs shape architecture viability.

- Failure mode: High relay or egress burden pushes smaller operators offline, reducing decentralization.

- Design response: Efficient relay protocols, pruning or light modes, and incentive-aware resource budgeting.

- Blockchain lens: Node operating cost directly affects participation and diversity.

Who pays for “free” decentralization in practice?

Fallacy #8: The Network Is Homogeneous

- Fallacy: All nodes run similar hardware, software, and links.

- Reality: Heterogeneity is the norm across clients, OSes, devices, and connection quality.

- Failure mode: Assumptions tied to one stack cause interoperability bugs and uneven behavior.

- Design response: Protocol conformance testing, feature negotiation, and graceful fallback paths.

- Blockchain lens: There is no oversight on who can join, and that means making no assumptions about the devices connecting; multi-client diversity improves resilience but increases compatibility burden.

When does client diversity increase safety, and when does it increase risk?

The Eight Fallacies of Distributed Computing

- The network is reliable.

- Latency is zero.

- Bandwidth is infinite.

- The network is secure.

- Topology doesn’t change.

- There is one administrator.

- Transport cost is zero.

- The network is homogeneous.

Case Study: Napster

Napster was an early 2000s P2P file-sharing network that caused significant controversy by enabling widespread music piracy.

It was famously shut down in 2001 after a court ruling found it liable for contributory copyright infringement.

- Napster’s network featured a hybrid P2P model consisting of:

- Centralized index: users register file metadata with Napster’s servers.

- Peer-to-peer transfer: actual files move directly between users.

- Result: fast search, but central control and a central point of failure.
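The hybrid model can be sketched as a central lookup table plus a direct fetch between peers. `CentralIndex`, the peer names, and the file contents are hypothetical and do not reflect Napster's actual protocol.

```python
class CentralIndex:
    """Hypothetical Napster-style index: stores who has what, not the files."""

    def __init__(self):
        self.locations = {}       # filename -> set of peer names

    def register(self, peer: str, filenames: list) -> None:
        for name in filenames:
            self.locations.setdefault(name, set()).add(peer)

    def search(self, filename: str) -> set:
        return self.locations.get(filename, set())


# Each peer keeps its own files; the index only learns the filenames.
peers = {
    "alice": {"song.mp3": b"alice-bytes"},
    "bob": {"song.mp3": b"bob-bytes", "talk.mp3": b"talk-bytes"},
}

index = CentralIndex()
for peer_name, files in peers.items():
    index.register(peer_name, list(files))

holders = index.search("talk.mp3")        # step 1: ask the central index
source = next(iter(holders))
data = peers[source]["talk.mp3"]          # step 2: fetch directly from that peer

print(holders, data)
```

If `index` disappears, every peer still holds its files but nobody can find them. That is the central point of failure noted above.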

Case Study: Gnutella & “Flooding”

- An early P2P file-sharing network that showed the pros and cons of an unstructured, “flooding” design.

How Gnutella Worked:

- Join: A new peer (a “servent”) connects to one or more known peers.

- Search: To find a file, the peer sends a `Query` message to all its neighbors.

- Flood: Those neighbors send the `Query` to their neighbors, and so on. A “Time-to-Live” (TTL) counter stops the flood from going on forever.

- Respond: If a peer has the file, it sends a `QueryHit` (a “Here it is!”) message back along the same path the query came from.

Problems:

- Massive Traffic: A single query could create a “broadcast storm,” flooding the network with duplicate messages.

- Poor Scaling: The network couldn’t grow large without being overwhelmed by search traffic.
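A small simulation makes the traffic problem visible. The sketch below floods a query through a toy four-peer network and counts the messages sent. The `dedupe` flag is an illustrative assumption for comparing naive forwarding with duplicate suppression, not a claim about how any Gnutella version behaved.

```python
from collections import deque


def flood(graph: dict, origin: str, ttl: int, dedupe: bool):
    """Return (peers reached, messages sent) for a TTL-limited flood."""
    reached = {origin}
    messages = 0
    queue = deque([(origin, None, ttl)])    # (peer, who it heard from, hops left)
    while queue:
        peer, sender, hops_left = queue.popleft()
        if hops_left == 0:
            continue                        # TTL expired: stop forwarding
        for neighbor in graph[peer]:
            if neighbor == sender:
                continue                    # never send straight back
            messages += 1
            already_seen = neighbor in reached
            reached.add(neighbor)
            if dedupe and already_seen:
                continue                    # receiver drops the duplicate
            queue.append((neighbor, peer, hops_left - 1))
    return reached, messages


# Toy network: four peers, every pair connected.
clique = {
    "A": ["B", "C", "D"],
    "B": ["A", "C", "D"],
    "C": ["A", "B", "D"],
    "D": ["A", "B", "C"],
}

reached, with_dedupe = flood(clique, "A", ttl=3, dedupe=True)
_, without_dedupe = flood(clique, "A", ttl=3, dedupe=False)

print(len(reached), with_dedupe, without_dedupe)
```

Only three peers need to hear the query. Even so, the flood sends 9 messages with duplicate suppression and 21 without it, and the gap widens as the network and TTL grow.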

Gnutella’s Fix: Supernodes & “Smart Flooding”

To solve its scaling problem, Gnutella 0.6 created a two-level hierarchy. This idea is central to how blockchains work today.

The Gnutella 0.6 Model

- Supernodes (or “Ultrapeers”): Peers with good bandwidth and uptime. They acted as powerful hubs, indexing the files of all the weaker peers connected to them.

- Leaf Nodes: Regular users (like on a home PC). They would connect only to a Supernode and send their searches only to that Supernode.

The “Smart Flood”

- A Leaf Node sends a query to its Supernode.

- The Supernode checks its own index of files from all its other leaves.

- If no match is found, the Supernode only floods the query to other Supernodes.

This was a huge improvement. It stopped millions of weak leaf nodes from participating in the network-clogging flood.
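The three steps of the smart flood can be sketched as follows. `Supernode`, `attach_leaf`, and the TTL default are hypothetical names and values. The real Gnutella 0.6 protocol used more elaborate routing than this simplified version.

```python
class Supernode:
    """Hypothetical ultrapeer that indexes the files of its leaf nodes."""

    def __init__(self, name: str):
        self.name = name
        self.index = {}           # filename -> set of leaf names
        self.neighbors = []       # other supernodes only, never leaves

    def attach_leaf(self, leaf: str, filenames: list) -> None:
        for filename in filenames:
            self.index.setdefault(filename, set()).add(leaf)

    def query(self, filename: str, ttl: int = 2, seen=None) -> set:
        seen = set() if seen is None else seen
        seen.add(self.name)
        hits = set(self.index.get(filename, set()))
        if hits or ttl == 0:
            return hits                       # answered locally, or out of hops
        for other in self.neighbors:          # flood among supernodes only
            if other.name not in seen:
                hits |= other.query(filename, ttl - 1, seen)
        return hits


s1, s2 = Supernode("s1"), Supernode("s2")
s1.neighbors, s2.neighbors = [s2], [s1]
s1.attach_leaf("leaf-1", ["a.txt"])
s2.attach_leaf("leaf-2", ["b.txt"])

local_hit = s1.query("a.txt")     # found in s1's own index
remote_hit = s1.query("b.txt")    # forwarded to s2, never to any leaf
miss = s1.query("missing.txt")

print(local_hit, remote_hit, miss)
```

Leaves never receive forwarded queries in this model. They only register files and ask their own supernode.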

The Blockchain Link

This two-tier model from Gnutella is the direct ancestor of modern blockchains.

Supernode \(\rightarrow\) Full Node

- A powerful, always-on computer.

- Downloads and validates every transaction and every block.

- Acts as a hub for the network, serving data to weaker peers.

Leaf Node \(\rightarrow\) Light Client

- Your mobile phone wallet or hardware wallet.

- Doesn’t download the whole blockchain.

- Connects to a trusted Full Node to query the network.

Gossip in a Blockchain Network

- In many blockchains, this two-tier network uses gossip to spread new information.

- When you send a transaction, your node does not flood the whole network at once.

- It forwards the transaction to a small set of peers, who validate it and relay it onward if it is new.

- This is a controlled flood built for spreading new transactions and blocks quickly across a changing network.
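The relay rule described above can be sketched as a round-based simulation. This is a simplified model: `gossip`, `fanout`, and the ring network are illustrative assumptions, validation of the item is omitted, and real clients add timing, prioritization, and peer scoring.

```python
import random


def gossip(peers: dict, origin: str, fanout: int, seed: int = 0):
    """Spread one item; each node relays it once to up to `fanout` random peers."""
    rng = random.Random(seed)
    seen = {origin}
    frontier = [origin]
    messages = 0
    while frontier:
        next_frontier = []
        for node in frontier:
            targets = rng.sample(peers[node], min(fanout, len(peers[node])))
            for target in targets:
                messages += 1
                if target not in seen:        # relay onward only if the item is new
                    seen.add(target)
                    next_frontier.append(target)
        frontier = next_frontier
    return seen, messages


# Toy network: six nodes in a ring, each connected to two neighbors.
names = ["n0", "n1", "n2", "n3", "n4", "n5"]
ring = {n: [names[i - 1], names[(i + 1) % len(names)]] for i, n in enumerate(names)}

reached, sent = gossip(ring, origin="n0", fanout=2)
print(len(reached), sent)
```

With `fanout` equal to each node's degree, every node is reached. With a smaller fanout, coverage becomes probabilistic, which is the trade-off between traffic and propagation speed.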

Scientific Applications

SETI@home’s “Screensaver Supercomputer”

- Millions of volunteers lent idle CPU time to analyze radio telescope data in support of the search for extraterrestrial intelligence (SETI).

- Work units were distributed; results validated redundantly to detect errors and cheating.

- Showed how P2P/volunteer computing can reach supercomputer scale.

Volunteer Computing Pipeline: BOINC

Generalized platform: fetch work → compute → upload results → validate → credit

Redundancy and quorum rules detect errors and malicious results.

Widely used beyond SETI for science projects.

BOINC: Berkeley Open Infrastructure for Network Computing.
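The redundancy and quorum idea can be sketched as a majority check over replicated results. This is a deliberate simplification: `validate`, the result strings, and the quorum of 2 are assumptions for illustration, and actual BOINC validators are project-specific.

```python
from collections import Counter


def validate(results: list, quorum: int):
    """Return the agreed result if at least `quorum` replicas match, else None."""
    if not results:
        return None
    value, votes = Counter(results).most_common(1)[0]
    return value if votes >= quorum else None


# The same work unit sent to several volunteers; one returns a bad result.
accepted = validate(["0x3fa1", "0x3fa1", "0xdead"], quorum=2)

# Two volunteers disagree: no quorum yet, so the work unit would be reissued.
unresolved = validate(["0x3fa1", "0xdead"], quorum=2)

print(accepted, unresolved)
```

A single faulty or dishonest volunteer cannot get a result accepted alone, at the cost of computing each work unit more than once.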

Communications Application: Skype’s P2P Telephony

- Early Skype used supernodes and end‑to‑end encrypted P2P paths.

- Login/authentication used central servers; after login, a supernode P2P overlay provided directory lookups, signaling, and NAT traversal via relays when needed.

- Demonstrated P2P for real‑time voice at global scale.

Finance Application: Bitcoin’s P2P Idea

Goal: electronic cash directly between peers without a trusted intermediary.

Network: nodes broadcast transactions and blocks over a P2P overlay.

We defer consensus and ledger internals to later lectures.

Summary

- What distributed systems are

- Client–server vs. P2P

- Centralized vs. decentralized trade-offs

- The fallacies

- Resilience patterns: retries, replication, graceful degradation

- Historical applications (Napster, Gnutella, SETI@home/BOINC, Skype, Bitcoin)

References

Distributed Systems — Army Cyber Institute — April 9, 2026