Trust and P2P

Section III: Distributed Systems Fundamentals

April 9, 2026

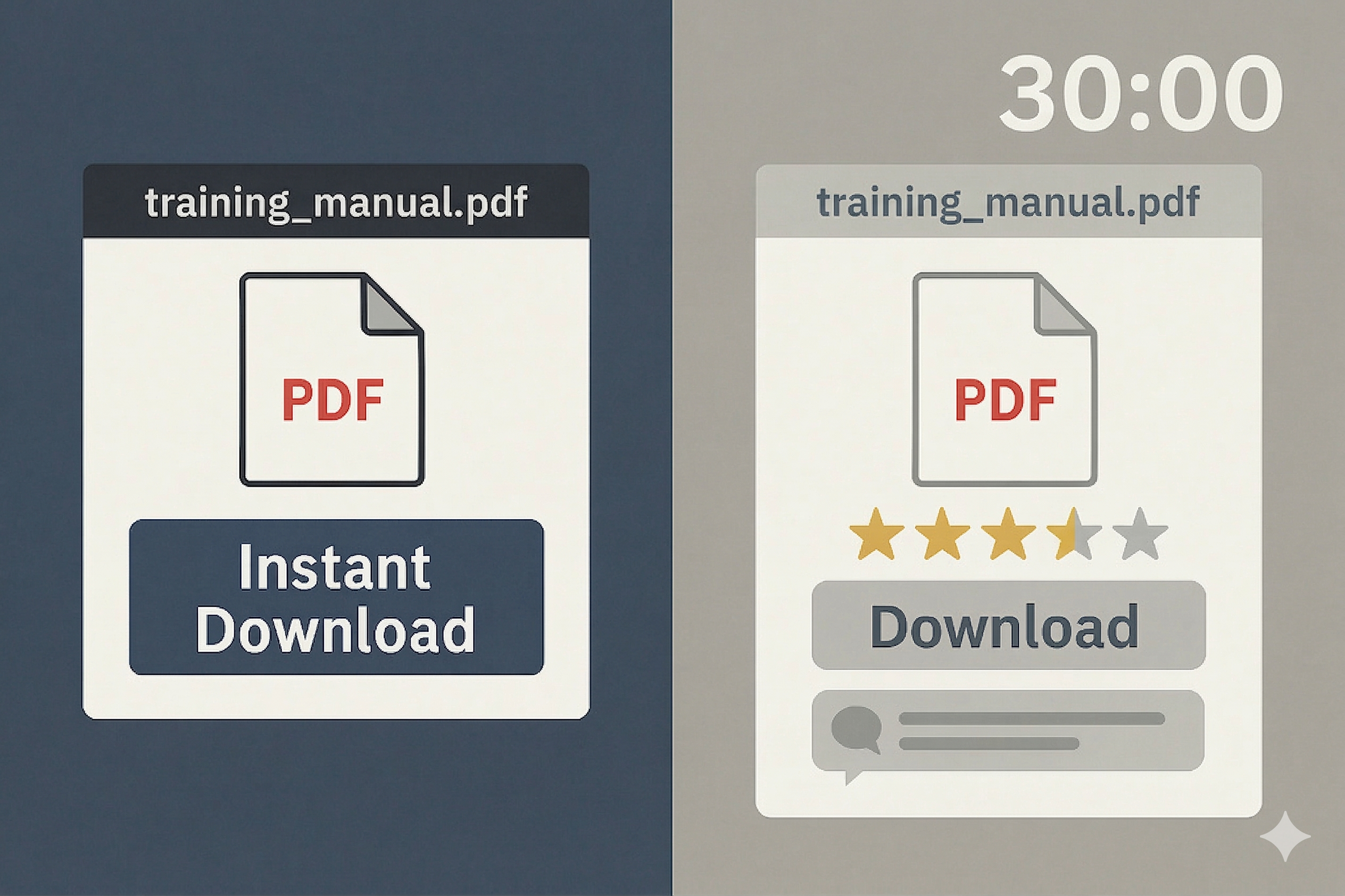

Intro: “The Bad Download”

- You need a copy of a specific training manual PDF. Your file got corrupted the night before a graded assignment

- You find two sources on a peer-to-peer file sharing network:

- Peer A has no download history, no ratings, and just appeared, but the file is available right now

- Peer B has months of activity, dozens of verified successful transfers, and multiple positive ratings, but they’re in demand and you’ll wait an estimated 30 minutes

- Your assignment is due in 45 minutes. Do you gamble on the unknown peer to save time, or wait for the one with a track record?

What Is “Trust” in P2P?

- Trust: expectation a peer will behave as promised

- Reputation: summary of past behavior used to predict trust

- Why it matters: no central authority; strangers must cooperate

Cryptographic vs. Social Trust

Cryptographic trust

- Verifies identity and message integrity

- Prevents tampering/impersonation

- Needs keys, signatures, certificates

Social (behavioral) trust

- Rates honesty/reliability over time

- Uses feedback and reputation

- Predicts who will deliver correctly

A valid signature proves who sent a message, but it says nothing about whether they will behave honestly. These layers solve separate problems.

Direct vs. Indirect Trust

- Direct trust: first-hand experiences with a peer

- Example: You’ve downloaded files from Peer A five times, and every file verified correct. Your confidence in Peer A is high.

- Strength: High confidence, but limited reach. You can only rate peers you’ve personally interacted with.

- Indirect trust: recommendations from others you trust

- Example: Peer B, whom you trust, vouches for Peer C, someone you’ve never met. You tentatively extend trust to C.

- Strength: Extends your reach across the network, but must be discounted by the recommender’s own credibility.

- Combining both: Use direct trust where you have it; fall back on indirect trust (weighted by recommender quality) for strangers. Direct experience should quickly override hearsay as relationships form.
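One way to picture the combination rule is a weighted blend in which direct evidence gradually takes over from recommendations. The sketch below is illustrative only: the function names, the `prior` of 0.5, and the `handoff` constant are assumptions made for this example, not part of any named trust model.

```python
from typing import Optional, Sequence, Tuple

# Each recommendation is (recommender_credibility, recommended_score), both in [0, 1].
Recommendation = Tuple[float, float]


def indirect_trust(recommendations: Sequence[Recommendation]) -> Optional[float]:
    """Credibility-weighted average of what recommenders say about a peer."""
    total_weight = sum(cred for cred, _ in recommendations)
    if total_weight == 0:
        return None  # no usable hearsay
    return sum(cred * score for cred, score in recommendations) / total_weight


def combined_trust(successes: int, failures: int,
                   recommendations: Sequence[Recommendation],
                   prior: float = 0.5, handoff: float = 3.0) -> float:
    """Blend direct and indirect trust; direct evidence dominates as it grows.

    `prior` (score for a total stranger) and `handoff` (how many direct
    interactions it takes to weigh direct and indirect evidence equally)
    are hypothetical tuning knobs.
    """
    hearsay = indirect_trust(recommendations)
    if hearsay is None:
        hearsay = prior
    n = successes + failures
    if n == 0:
        return hearsay  # stranger: fall back on recommendations
    direct = successes / n
    w = n / (n + handoff)  # weight on direct experience, approaches 1 as n grows
    return w * direct + (1 - w) * hearsay


# A stranger vouched for by a credible peer, then the same peer after
# five verified downloads of your own despite a poor recommendation.
print(combined_trust(0, 0, [(0.9, 0.8)]))
print(combined_trust(5, 0, [(0.9, 0.2)]))
```

With a small `handoff`, a handful of first-hand interactions is enough to outweigh hearsay, which matches the idea that direct experience should quickly override recommendations.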

Properties of Reputation Systems

- Accuracy: Scores correlate with actual future behavior; honest peers trend high, dishonest peers trend low. Scores should reflect verified outcomes, not just volume of ratings.

- Scalability: Computation, storage, and communication costs remain manageable as the network grows. Updates should be incremental, not require global recomputation.

- Robustness: The system resists manipulation. Sybil floods, collusion rings, and slander campaigns cannot easily distort scores. No single actor or small group can dominate the signal.

- Bootstrap: New participants start with low or neutral trust and must earn reputation through verified interactions. This prevents free-ride entry while still allowing honest newcomers to climb.

PeerTrust: How Trust Is Calculated

- Feedback score: how satisfied peers were with past interactions.

- Feedback scope: number of transactions behind the rating.

- Rater credibility: weight ratings by how trustworthy the reviewer is.

- Context factors: consider the type of transaction and the surrounding community norms.

Note

Simplified formula:

\[T(peer) = \sum_{i} \underbrace{S(i)}_{\text{satisfaction}} \times \underbrace{Cr(i)}_{\text{rater credibility}} \times \underbrace{TF(i)}_{\text{context weight}}\]

Each rater \(i\)’s feedback is scaled by how credible they are and how relevant the transaction type is. Low-credibility raters and low-stakes transactions contribute less to the final score.
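The simplified sum above can be written out directly. This is a minimal sketch of the slide's formula only, not the full PeerTrust metric; the `Feedback` class, its field names, and the sample values are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Feedback:
    satisfaction: float    # S(i): how satisfied rater i was, in [0, 1]
    credibility: float     # Cr(i): how credible rater i is, in [0, 1]
    context_weight: float  # TF(i): relevance/stakes of the transaction, in [0, 1]


def peertrust_score(feedback: Iterable[Feedback]) -> float:
    """T(peer) = sum over raters of S(i) * Cr(i) * TF(i)."""
    return sum(f.satisfaction * f.credibility * f.context_weight for f in feedback)


# Hypothetical feedback: one credible rater on a high-stakes transfer,
# one barely credible rater on a low-stakes transfer.
feedback = [
    Feedback(satisfaction=1.0, credibility=0.9, context_weight=1.0),
    Feedback(satisfaction=1.0, credibility=0.05, context_weight=0.2),
]
print(peertrust_score(feedback))
```

Both raters report full satisfaction, yet the first contributes 0.9 to the score and the second only 0.01, which is the scaling effect described above.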

PeerTrust: Handling Manipulation

How do PeerTrust’s factors defend against manipulation?

- Credibility weighting vs. ballot stuffing: Five fake accounts all rate each other 5 stars. But none of them have established credibility, so their ratings carry near-zero weight. The inflation attempt fizzles.

- Context sensitivity vs. reputation milking: A peer completes 50 small file transfers successfully, then delivers a corrupted critical file. The high-stakes failure is weighted far more heavily, so the score drops sharply despite the long “good” streak.

- Feedback scope vs. thin history: A newcomer with 3 perfect ratings looks good, but 3 data points aren’t enough for confidence. PeerTrust’s scope parameter keeps scores tentative until evidence accumulates.
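The thin-history case can be illustrated with a simple shrinkage rule that pulls a score toward a neutral value until enough transactions back it. This is one possible way to model feedback scope, assumed here for illustration; the `prior` and `pseudo_count` parameters are hypothetical and not taken from PeerTrust.

```python
def scope_adjusted_score(mean_rating: float, num_transactions: int,
                         prior: float = 0.5, pseudo_count: float = 10.0) -> float:
    """Shrink a mean rating toward `prior` when few transactions support it.

    `pseudo_count` (hypothetical) is the number of transactions at which the
    observed mean and the neutral prior are weighted equally.
    """
    if num_transactions < 0:
        raise ValueError("num_transactions must be non-negative")
    confidence = num_transactions / (num_transactions + pseudo_count)
    return prior + confidence * (mean_rating - prior)


# Same perfect mean rating, very different amounts of evidence.
print(scope_adjusted_score(1.0, 3))    # newcomer with 3 perfect ratings
print(scope_adjusted_score(1.0, 300))  # veteran with 300 perfect ratings
```

Under these assumed settings the newcomer lands only slightly above neutral, while the veteran's score sits close to the observed mean.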

EigenTrust: From Local Opinions to Global Scores

- Begin with each peer’s local satisfaction ratios from direct interactions.

- Normalize per rater to form a consistent trust matrix.

- Iteratively propagate trust values through the network until scores converge and stabilize.

Simplified formulas:

Notation: \(s_{ij}\) = net satisfaction (successful minus failed interactions between peers \(i\) and \(j\)); \(c_{ij}\) = normalized local trust that \(i\) places in \(j\); \(C\) = the matrix of all \(c_{ij}\) values; \(\vec{t}\) = the global trust vector; \(k\) = iteration round.

- Local trust: \(c_{ij} = \frac{\max(s_{ij},\; 0)}{\sum_{m} \max(s_{im},\; 0)}\). Normalize each peer’s positive experiences into a probability distribution

- Global trust: \(\vec{t}^{\,(k+1)} = C^T \cdot \vec{t}^{\,(k)}\). Iteratively propagate trust through the network until scores stabilize

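- Anchor mixing: blend in a small weight \(a\) toward pre-trusted peers at each step to prevent drift: \(\vec{t}^{\,(k+1)} = (1-a)\,C^T \cdot \vec{t}^{\,(k)} + a\,\vec{p}\), where \(\vec{p}\) is a fixed distribution over the pre-trusted peers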

The result is a single trust vector \(\vec{t}\) where each entry is peer \(j\)’s global reputation.
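The three steps (normalize, propagate, mix in anchors) can be sketched in plain Python. This is a small centralized illustration, not a distributed implementation. The names `eigentrust` and `anchor_weight`, the value 0.15, the exclusion of self-ratings, and the fallback for peers with no positive experience are all assumptions made for the example.

```python
from typing import List, Sequence, Set


def eigentrust(s: Sequence[Sequence[float]], pretrusted: Set[int],
               anchor_weight: float = 0.15, tol: float = 1e-10,
               max_iter: int = 1000) -> List[float]:
    """Return the global trust vector for the net-satisfaction matrix s."""
    if not pretrusted:
        raise ValueError("at least one pre-trusted peer is required")
    n = len(s)
    # p: uniform distribution over the pre-trusted (anchor) peers.
    p = [1.0 / len(pretrusted) if j in pretrusted else 0.0 for j in range(n)]

    # Local trust: c_ij = max(s_ij, 0) / sum_m max(s_im, 0), ignoring self-ratings.
    c = []
    for i in range(n):
        row = [max(s[i][j], 0.0) if j != i else 0.0 for j in range(n)]
        total = sum(row)
        # Assumption: a peer with no positive experience defers to the anchors.
        c.append([v / total for v in row] if total > 0 else list(p))

    t = list(p)  # start from the anchors
    for _ in range(max_iter):
        # Global trust step (C^T t) blended with the anchor distribution p.
        new_t = [
            (1 - anchor_weight) * sum(c[i][j] * t[i] for i in range(n))
            + anchor_weight * p[j]
            for j in range(n)
        ]
        if sum(abs(a - b) for a, b in zip(new_t, t)) < tol:
            return new_t
        t = new_t
    return t


# Hypothetical data: peer 0 is pre-trusted; nobody has a positive record with peer 3.
s = [
    [0, 4, 2, 0],
    [3, 0, 1, 0],
    [2, 2, 0, -5],
    [5, 5, 5, 0],
]
t = eigentrust(s, pretrusted={0})
print([round(x, 3) for x in t])
```

Because every row of the normalized matrix sums to 1, the entries of the result also sum to 1. Peer 3 ends with zero global trust even though it rates everyone else highly, since no trusted peer has a positive record with it.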

EigenTrust: Anchors and Collusion Resistance

How do EigenTrust’s anchors defend against manipulation?

- Anchors vs. collusion: Ten nodes form a clique and rate each other highly. But trust only propagates globally if it traces back to anchor nodes, and anchors never endorsed the clique. Their mutual praise stays contained within their cluster; the rest of the network never sees high scores for them.

- Normalization vs. flooding: Each node’s trust values are normalized to probabilities, so total influence stays bounded. A node that rates 100 peers highly gives each one a smaller share of its trust than a node that rates 5. Influence is spread, not amplified.

- Low initial trust vs. rebranding: A misbehaving node discards its identity and rejoins fresh. But newcomers start with near-zero trust weight. There’s no shortcut back to influence without sustained, verified good behavior.

PeerTrust vs. EigenTrust: Summary

Now that we’ve examined each model individually:

PeerTrust: Local / Personalized

- Multi-factor: feedback score, scope, rater credibility, context

- Scores are per-peer and context-aware, tailored to your perspective

- Fast reaction to your own evidence

- Narrow coverage; vulnerable to echo chambers

EigenTrust: Global / Network-Wide

- Normalizes local trust; iterates to converge on global scores

- Pre-trusted anchors ground the computation and resist collusion

- Broad coverage of the network; isolates universally bad actors

- Needs careful anchoring and damping

Note

Many real systems blend both: start with a global baseline (EigenTrust-style) to stay safe among strangers, then personalize with local context (PeerTrust-style) as your own interactions accumulate.

Trust but Verify: The Complete Trust Loop

A complete trust decision has three steps:

- Cryptographic verification. Use hashes and digital signatures to confirm data integrity and sender identity. This is the crypto trust layer from earlier: it answers “was this tampered with?” but not “is this person honest?”

- Reputation-based selection. Choose peers with established track records for the task type. Use PeerTrust-style context weighting for tasks you understand, EigenTrust-style global scores for strangers.

- Close the loop. Feed verified outcomes (good and bad) back into the reputation system. Verified results should carry more weight than unverified opinions, because they’re grounded in facts rather than assertions.

Note

This is the “trust but verify” pattern: let reputation aim you toward good peers, let verification confirm the result, then update your aim for next time.
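The loop can be sketched end to end with a hash check and a simple outcome log. The sketch covers only the hash part of verification (signatures are out of scope here) and assumes the expected SHA-256 digest was obtained from a source you already trust. The function names, the in-memory `history` dictionary, and the smoothed success rate are hypothetical choices for this example.

```python
import hashlib
from typing import Dict, List

History = Dict[str, Dict[str, int]]


def verify_sha256(data: bytes, expected_hex: str) -> bool:
    """Step 1: confirm the downloaded bytes match the published digest."""
    return hashlib.sha256(data).hexdigest() == expected_hex.lower()


def choose_peer(history: History, candidates: List[str]) -> str:
    """Step 2: pick the candidate with the best smoothed verified-success rate."""
    def rate(peer: str) -> float:
        counts = history.get(peer, {"ok": 0, "bad": 0})
        # Add-one smoothing so an unknown peer scores 0.5 rather than 0 or 1.
        return (counts["ok"] + 1) / (counts["ok"] + counts["bad"] + 2)
    return max(candidates, key=rate)


def record_outcome(history: History, peer_id: str, verified: bool) -> None:
    """Step 3: feed the verified result back into the reputation data."""
    counts = history.setdefault(peer_id, {"ok": 0, "bad": 0})
    counts["ok" if verified else "bad"] += 1


# Hypothetical run: peer "B" delivers a file that verifies, peer "A" does not.
original = b"training manual contents"
expected = hashlib.sha256(original).hexdigest()
history: History = {}
record_outcome(history, "B", verify_sha256(original, expected))
record_outcome(history, "A", verify_sha256(b"corrupted bytes", expected))
print(choose_peer(history, ["A", "B"]))
```

Only outcomes that passed or failed the hash check are recorded, so the reputation data stays grounded in verified results rather than opinions.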

Threats: Sybil Attacks

The attack: A single adversary creates many fake identities to gain outsized influence: flooding feedback, capturing neighbor slots, or surrounding a target.

Scale matters: 1 new identity vs. 1,000:

- One newcomer is straightforward to evaluate, but 1,000 newcomers controlled by one attacker can overwhelm by volume: mutual endorsements, vote flooding, or neighbor capture.

How each system defends:

- PeerTrust: Newcomers have near-zero credibility, so their ratings carry almost no weight. Even 1,000 low-credibility raters endorsing each other produce negligible score inflation. Credibility must be earned through verified interactions.

- EigenTrust: Trust only propagates globally through anchor nodes. A cluster of 1,000 Sybils recycling praise among themselves gains no endorsement from anchors, so their trust stays contained. Normalization bounds total influence per node.

The shared principle: influence must be earned, not manufactured. Cheap identities can flood a network, but both systems ensure those identities start with near-zero weight and can only gain influence through sustained, verified good behavior.
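A short calculation shows why volume alone does not help the attacker under credibility weighting. The sketch uses a credibility-weighted average, which is an assumed normalization for this example rather than the exact PeerTrust metric; the credibility values are hypothetical.

```python
from typing import List, Tuple

# (rating in [0, 1], rater credibility in [0, 1])
Rating = Tuple[float, float]


def credibility_weighted_rating(ratings: List[Rating], neutral: float = 0.5) -> float:
    """Average the ratings, weighting each by its rater's credibility."""
    total = sum(cred for _, cred in ratings)
    if total == 0:
        return neutral  # nobody credible has spoken yet
    return sum(rating * cred for rating, cred in ratings) / total


def plain_average(ratings: List[Rating]) -> float:
    """Naive average that counts every identity equally."""
    return sum(rating for rating, _ in ratings) / len(ratings)


# Hypothetical scenario: 5 established raters report poor service,
# 1,000 fresh Sybil identities report perfect service.
honest = [(0.2, 0.8)] * 5
sybils = [(1.0, 0.0001)] * 1000

unweighted = plain_average(honest + sybils)
weighted = credibility_weighted_rating(honest + sybils)
print(round(unweighted, 3), round(weighted, 3))
```

With these numbers the naive average is pushed to about 0.996, while the credibility-weighted score moves only from 0.2 to about 0.22.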

Threats: Rebranding Attacks

The attack: A node with a bad reputation discards its identity and rejoins as a “new” participant with a clean slate.

- Unlike Sybil attacks (many identities at once), rebranding is a serial strategy: one identity at a time, discarded when tainted.

- The attacker hopes that “new” means “neutral” rather than “suspicious.”

How each system defends:

- PeerTrust: Newcomers start with low feedback scope (few transactions) and near-zero rater credibility. Even after rebranding, the attacker must complete many verified interactions before gaining meaningful influence, paying the same cost as the first time.

- EigenTrust: New identities carry near-zero weight in the global trust vector. Without endorsement from anchor nodes, the rebranded identity has no shortcut to high scores. The cost of rebuilding reputation is the defense.

Note

The shared principle: there is no free ride for new identities. Trust must be earned through sustained, verified good behavior, making rebranding a slow and costly strategy.

Threats: Collusion

The attack: A group of 10 peers forms a ring. They rate each other 5 stars on every interaction and rate a competing honest peer 1 star, trying to boost themselves and bury the competition.

How each system defends:

- PeerTrust: The ring members must each have independently earned credibility before their ratings carry weight. Ten low-credibility peers rating each other produce negligible score changes. Think: 10 strangers writing each other glowing LinkedIn recommendations on day one.

- EigenTrust: Trust only propagates globally through anchor nodes. The ring’s internal praise circulates within the cluster but never reaches anchors, so their scores stay contained. Normalization ensures that even high-volume raters can’t amplify each other beyond their bounded share.

The shared principle: praise without external endorsement stays local. Colluders can inflate each other’s ratings, but without credibility (PeerTrust) or anchor-traced trust (EigenTrust), that inflation never reaches the broader network.
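The containment effect on the EigenTrust side can be checked numerically. The sketch below assumes an already normalized trust matrix with four honest peers (peer 0 is the anchor) and a three-peer ring that only rates itself. It deliberately starts the ring with an equal share of trust to show that the share drains away. The peer counts, `anchor_weight` of 0.15, and function names are hypothetical.

```python
from typing import List

HONEST = [0, 1, 2, 3]
RING = [4, 5, 6]
N = len(HONEST) + len(RING)

# Normalized local trust: each peer splits its trust evenly within its own group.
# No honest peer assigns any trust to a ring member.
C = [[0.0] * N for _ in range(N)]
for group in (HONEST, RING):
    for i in group:
        for j in group:
            if i != j:
                C[i][j] = 1.0 / (len(group) - 1)

P = [1.0 if j == 0 else 0.0 for j in range(N)]  # peer 0 is the only anchor


def propagate(t: List[float], anchor_weight: float = 0.15) -> List[float]:
    """One round: t_next = (1 - a) * C^T t + a * p."""
    return [
        (1 - anchor_weight) * sum(C[i][j] * t[i] for i in range(N))
        + anchor_weight * P[j]
        for j in range(N)
    ]


def ring_mass_after(rounds: int) -> float:
    """Total global trust held by the ring after the given number of rounds."""
    t = [1.0 / N] * N  # generous start: everyone, ring included, gets an equal share
    for _ in range(rounds):
        t = propagate(t)
    return sum(t[j] for j in RING)


for rounds in (0, 1, 10, 100):
    print(rounds, ring_mass_after(rounds))
```

Each round the ring keeps only a fraction (1 minus the anchor weight) of its trust and receives nothing from outside, so its share shrinks geometrically. This holds only while no honest or anchor peer rates a ring member positively.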

Threats: Slander

The attack: Five malicious peers target an honest node by flooding it with false 1-star ratings after every interaction, trying to destroy its reputation.

How each system defends:

- PeerTrust: Your own direct experience with the target overrides distant negatives. If you’ve had 20 successful interactions with the target, five bad ratings from strangers barely move the needle. Credibility weighting further discounts the attackers if they lack established track records.

- EigenTrust: The slanderers’ influence on the global trust vector is proportional to their own trust scores. If they’re low-trust nodes, their negative feedback propagates weakly. The target’s score remains anchored by endorsements that trace back to pre-trusted nodes.

The shared principle: your own experience outweighs distant accusations. Slander fails because low-credibility attackers have limited influence, and direct positive interactions with the target carry more weight than secondhand negatives.

Threats: On–Off (Reputation Milking)

- Pattern: an actor builds trust through good behavior, then exploits that reputation to cheat before disappearing.

- Mitigation: apply recency weighting so recent behavior matters most, and use anomaly detection to flag sudden shifts.

- Accountability: penalize severe or high-impact failures more heavily to discourage short-term exploitation.

Real-world examples:

- Reddit karma farming: Users post harmless, crowd-pleasing content for months to accumulate karma. Once the account looks credible, they sell it or pivot to pushing spam, scams, or propaganda, exploiting the trust signals they built.

- Gaming / social account sales: Players grind a high-level or high-reputation account in a game or social network, then sell it. The buyer leverages the reputation for malicious activity that the original owner’s history didn’t predict.

The shared principle: trust must be renewed, not banked. Recency weighting ensures that past good behavior cannot indefinitely shield present exploitation. Recent actions always speak louder than old ones.
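Recency weighting can be sketched with exponential decay, where each outcome counts half as much for every `half_life` interactions that have happened since. The decay form, the `half_life` parameter, and the sample history are assumptions for illustration; the source does not prescribe a specific formula.

```python
from typing import Sequence


def recency_weighted_score(outcomes: Sequence[float], half_life: float = 10.0) -> float:
    """Weighted mean of outcomes (oldest first, each in [0, 1]).

    The newest outcome has weight 1; an outcome `half_life` interactions
    older has weight 0.5, and so on.
    """
    if not outcomes:
        raise ValueError("need at least one outcome")
    n = len(outcomes)
    weights = [0.5 ** ((n - 1 - idx) / half_life) for idx in range(n)]
    return sum(w * o for w, o in zip(weights, outcomes)) / sum(weights)


# Hypothetical on-off pattern: 50 good transfers, then 5 bad ones.
history = [1.0] * 50 + [0.0] * 5
plain = sum(history) / len(history)
decayed = recency_weighted_score(history, half_life=5.0)
print(round(plain, 3), round(decayed, 3))
```

The plain average still looks healthy at about 0.91, while the recency-weighted score has already fallen to roughly 0.5, so the long good streak no longer shields the recent failures.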

Key Considerations in System Design

- Self-policing: rely on decentralized mechanisms for moderation, storage, and computation.

- Privacy-aware: preserve user anonymity and data protection while maintaining accountability.

- Efficient: minimize messaging, bandwidth, and state overhead for scalability.

- Robust: resist Sybil, collusion, slander, and on–off attacks through cost and credibility mechanisms.

- Welcoming bootstrap: provide safe, fair onboarding so newcomers can build reputation gradually.

Micro Lab: Trust Simulation

Key Takeaways

- Cryptography ≠ behavior: Cryptographic tools (signatures, hashes, certificates) prove identity and integrity, but they cannot predict whether a peer will act honestly. Reputation fills that gap by modeling observed outcomes over time.

- Direct + indirect trust: Direct trust comes from your own verified experiences and is the strongest signal. Indirect trust extends your reach through recommendations, but must be weighted by the recommender’s credibility.

- PeerTrust provides multi-factor, context-aware scoring. Feedback is weighted by rater credibility, transaction scope, and context. Scores are personalized to your perspective and update quickly.

- EigenTrust provides anchored global reputation. Local trust values are normalized and iteratively propagated to produce network-wide scores. Pre-trusted anchors ground the computation and resist collusion.

- Design for adversaries: Sybil attacks, collusion, slander, rebranding, and on-off exploitation are expected threats. Defend with: no free ride for newcomers, anchor-based propagation, credibility weighting, recency decay, and verification loops.

Check‑on‑Learning

- Why can’t cryptography alone solve honest behavior?

- When would you prefer global over local trust?

- Name one mitigation for Sybil and explain why it works.

- How does transaction context change trust scoring?

- What’s your verify‑before‑trust plan for a critical download?


Trust and P2P — Army Cyber Institute — April 9, 2026